This is the second article in a series on Microsoft Azure features and services. If you are just joining us, you can take a look at the previous article on Microsoft Azure Blob Storage. It covers getting started with an Azure Storage account, the development tools, using the Azure Storage Client SDK, and pricing and performance considerations, none of which we will repeat here.

In this next article in the series, we will look at the Microsoft Azure Table Storage service. I have decided to split it into two parts, since we have a lot to cover. In the first part we will cover working with NoSQL databases, how they differ from relational databases, designing and creating an Azure Table, and all the available operations for persisting data. In the second part we will cover writing queries against our tables, retrying failed operations, concurrency and, of course, security.

Let's get down to business and see what we can learn about working with Azure Tables:

- What Do You Mean It's Not a Relational Database?

- Firing Up Our Tables

- The Most Important Question: How to Design a Table?

- Working With Azure Tables (Table Operations)

- Batch Operations with Entity Group Transactions

- Queries: Efficient and Inefficient

- Rats! We Hit a Snag (Working with Retry Policies)

- Concurrency

- Security

- Secondary Indexes

The Right Tool for the Right Job

As with most tools, whether it is the screwdriver in your toolbox, the lawn mower in your garage or the software development IDE you use, each has its specific use. The same applies to Azure Table Storage. There is a specific set of jobs that Azure Table Storage is well suited for (read: it is not ideal for every job).

When we need to work with large amounts of data (read: terabytes) that does not have complex relationships, and we require extremely fast lookups and persistence along with the ability to scale easily, Azure Tables shine. This is due to a combination of Azure Table design characteristics which, when the tables are designed properly, give us out-of-the-box scalable tables that are partitioned under heavy load, along with highly efficient data retrieval and persistence throughput.

Before diving into the specifics of Azure Tables, let's quickly run through a comparison of Azure Tables and our familiar relational database.

What Do You Mean It's Not a Relational Database?

There is a good chance that you are familiar with relational databases (RDBMS) and think in those terms when it comes to storing and structuring data in a database. However, Azure Table Storage is not a relational database but a NoSQL database, so a completely different approach is needed when we set out to put our data in Azure Table Storage.

When we think of relational databases, we think of separate table schemas, table relationships with foreign keys and constraints, stored procedures, columns and rows. We are not burdened with any of this when working with Azure Tables. This is one of the positive characteristics of NoSQL databases, which allow us to focus solely on the data. Of course, those same characteristics are what give a relational database its advantages.

Since Azure Tables are not ideal for every case in which we need to persist data, let's look at some of the main differences between Azure Tables and relational databases to help draw that defining line.

Azure Tables vs. Relational Databases

This is not an exhaustive list of differences, but these are the important ones to keep in mind in order to make the right decisions when working with Azure Tables, and they will ultimately play an important role when designing your Azure Tables:

- Different vocabulary: In traditional relational database vocabulary, we refer to the columns and rows of a table. In the NoSQL world, however, we store entities (rows) with properties (columns) in our tables.

- No defined relationships between tables: The lack of relationships means you are not going to build relationships to other tables and store their primary identifiers. This is because you cannot perform joins against other NoSQL tables. There is, however, a way to store entities with a different schema in the same table in order to keep related entities close at hand.

- All schemas are created equal: Unlike a relational database table, which has an associated, defined schema that all rows must adhere to, an Azure Table can store entities with different schemas in a single table. To take it a step further, we can think of an Azure Table as a container of entities, where an entity is simply a bag of name/value pairs. Each entity in a table can contain different name/value pairs of data. The only common denominator shared by a table's entities is the three required properties (partition key, row key and timestamp), two of which we will look at in more detail.

- Indexes are limited: In a relational database, we have the ability to create indexes, such as a secondary index, to help with query efficiency. We do not have that luxury here, and queries against Azure Tables on entity properties other than the required properties mentioned earlier can be very inefficient. We will talk more about this when we cover the important factors in designing our tables.

If you have no experience with relational databases, much of the above may not make much sense, and if you come from a NoSQL background, I am preaching to the choir. Without bombarding you with any more technical detail, let's dive in and get started with Azure Tables. Once we are there, we will look at the most important aspect of Azure Tables: design.

Firing Up Our Tables

As a reminder, you can refer to the previous post on Azure Blob Storage to get set up with a storage account. Once you are set up with an Azure Storage account, we can demonstrate how simple it is to persist data to an Azure Table in two easy steps:

Step 1 (Create the table)

    CloudTable table = _client.GetTableReference(tableName);
    table.CreateIfNotExists();

Step 2 (Create and persist a dynamic table entity)

    // A DynamicTableEntity needs no predefined class; its properties are name/value pairs.
    var dynamicEntity = new DynamicTableEntity
    {
        PartitionKey = "Games",
        RowKey = "Outside",
        Properties = new Dictionary<string, EntityProperty>
        {
            { "Name", new EntityProperty("Corn Hole") }
        }
    };
    var tableOperation = TableOperation.Insert(dynamicEntity);
    var result = table.Execute(tableOperation);

Now, this was for demonstration purposes only, and we are getting ahead of ourselves. But you can see how easy it is to persist data to an Azure Table using only the tools already available, without any predefined custom objects to hold the data I wanted to save. Starting with the next section, we will get into creating tables and persisting data. But this brings us to the most important point when working with Azure Tables: design.

The Most Important Question: How to Design a Table?

If you have had anything to do with developing an application backed by a relational database, you have probably seen how requirements usually start with some data that needs to be persisted. A table is then designed to hold that data. Only afterwards is any thought put into how that information needs to be queried and used. Sound familiar?

When it comes to Azure Tables, the most important question to answer before you are ready to persist data to Table Storage is: what are you going to do with the data?

We cannot afford to answer this question after we have already persisted data to Table Storage. In order to get the highly efficient retrieval speeds and horizontal scaling capabilities that Azure Table Storage offers, we first need to make some important decisions about how we are going to structure the data in our Azure Table. Remember, the entities we store are un-schematized, but that does not mean they are unstructured. So, let's break down the important decisions we need to make to structure our data.

Partition Key and Row Key

As mentioned earlier, with a NoSQL database we store entities that are simply bags of name/value pairs of data. In addition, there is a common denominator between all entities in all tables: the required Partition Key, Row Key and Timestamp properties. For now, the most important of these are the Partition Key and Row Key.

Partition Key

So, how do Azure Tables automatically provide out-of-the-box scale-out capabilities when data is under heavy load? This is where the Partition Key becomes probably the more important of the two properties we are currently evaluating. The partition key is a string property that is required for all entities. Unlike a primary key on a relational database table, it isn't unique. But it is the key (no pun intended) that allows Azure to determine where divisions can be made in a single Azure Table, so that the entities belonging to one partition can be moved to their own partition server.

Every partition is served by a Partition Server (which can be responsible for multiple partitions), but a partition under heavy load can be assigned its own Partition Server. It is this distribution of load across partitions that allows your Azure Table Storage to be highly scalable.

So let's stop for a second and think about this. Suppose you design your table with a single partition key, such as a table that stores product information where you decide to make your partition key “products” and all entities fall under this single partition. How can Azure partition your data so that it can automatically scale out your table for efficient performance? It can't. All 50,000,000 shoe products you store fall under the same partition.

Unfortunately, partitions also create a boundary that directly affects performance. Therefore, in contrast to the all-in-one partition approach, creating a unique partition for every entity is a pattern that will cost you the ability to perform batch operations (discussed later) and will incur performance penalties for insert throughput as well as for queries that cross partition boundaries.

Finally, sorting is not something that can be controlled after the data has been persisted. Data in your table is sorted in ascending order first by the partition key, then by the row key. Therefore, if sort order is important, you will need to take it into account when defining your partition keys and row keys. Because the keys are strings, a good example is how 11 would come before 2 unless padded with 0s.
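To make the padding point concrete, here is a minimal sketch in plain C# with no SDK dependency. It assumes keys are compared character by character (ordinal order); `KeyPadding`, `Pad` and `SortLikeTable` are hypothetical names used only for illustration.

```csharp
using System;
using System.Collections.Generic;
using System.Globalization;
using System.Linq;

public static class KeyPadding
{
    // Left-pads a non-negative number with zeros so that string order
    // matches numeric order for values of up to `width` digits.
    public static string Pad(int value, int width)
    {
        if (value < 0) throw new ArgumentOutOfRangeException(nameof(value));
        return value.ToString(CultureInfo.InvariantCulture).PadLeft(width, '0');
    }

    // Sorts keys ascending, character by character (ordinal comparison).
    // Assumption: this approximates the order in which keys come back from a table.
    public static List<string> SortLikeTable(IEnumerable<string> keys)
    {
        return keys.OrderBy(k => k, StringComparer.Ordinal).ToList();
    }
}
```

With these helpers, sorting "2" and "11" puts "11" first, while the padded forms "02" and "11" come back in numeric order. The width should cover the largest value you expect to store.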

Row Key

The row key is also a string property, and it must be unique within the partition. As I mentioned earlier, secondary indexes are a feature that Azure Tables lack. However, the combination of the Partition Key and Row Key creates an entity's primary key and forms a single clustered index within a table.

Row keys also provide a second applied ascending sort order after the applied ascending sort order of the partition key. Therefore, depending on your circumstances, further thought might be required on how you want data to be sorted when retrieved.

Therefore, the decision you need to make is how the data will be queried: what are the common queries that you expect to be made? Based on that answer, you need to determine how the data can be grouped into partitions. The following list of guidelines is not exhaustive, but it can help make table design decisions easier.

- Determine what the common queries will be against the data.

- Based on those common queries, determine how the data can be grouped (partitions).

- Avoid over-sized partitions that would hinder the ability to scale.

- Avoid extremely small (single entity) partitions that would negate the ability to perform batch operations and hinder insert throughput.

- Consider your sort order requirements when determining exact partition and row key naming.

We will continue to look at all these points as we cover table operations to persist and query the data. There is more to consider than just these starting points, such as how inefficient queries on non-key entity properties are, since they force a full table scan. Since this article is not strictly about designing tables, I would recommend reading this helpful MSDN article for more on how different partition sizes affect queries as well as more information on table design.

The most important question you need to ask before using or designing your Azure Tables: how will the data be queried?

Working With Azure Tables (Table Operations)

So now that you have decided how you want to structure your tables and you’re ready to start persisting data, let’s get down to business. We are utilizing the .NET Azure Storage Client SDK which we fully covered in the previous Azure Blob Storage article. There, you can learn your different options for acquiring the Storage Client SDK. Despite having covered the details about utilizing your Storage Account’s Access keys, I am going to cover that small part again here.

Therefore, assuming you are up and running with the .NET Storage Client SDK, let’s start with the minimal requirements for working with an Azure Table.

Minimal Requirements

The table itself is associated with a specific Azure Storage Account. Therefore, if we want to perform CRUD and query operations on a specific table in our storage account, we will need to instantiate objects that represent our storage account, a table client for that storage account and, finally, a reference to the table.

Keeping that in mind, the minimal requirements to work with a table would go something like the following:

- First, you create a storage account object that represents your Azure Storage Account

- Second, through the storage account object, you can create a Table Client object

- Third, through the Table Client object, obtain an object that references a table within your storage account.

- Finally, through the specific table reference, you can execute table operations

    CloudStorageAccount account = new CloudStorageAccount(
        new StorageCredentials("your-storage-account-name", "your-storage-account-access-key"), true);
    CloudTableClient tableClient = account.CreateCloudTableClient();
    CloudTable table = tableClient.GetTableReference(tableName);

You can see for the CloudStorageAccount object we are creating a StorageCredentials object and passing two strings, the storage account name, and a storage account access key (more on that in a moment). In essence, we create each required parent object, until we eventually get a reference to a Table. Despite having covered setting up a CloudStorageAccount object in the previous linked post, below is a refresher on utilizing your storage’s access keys.

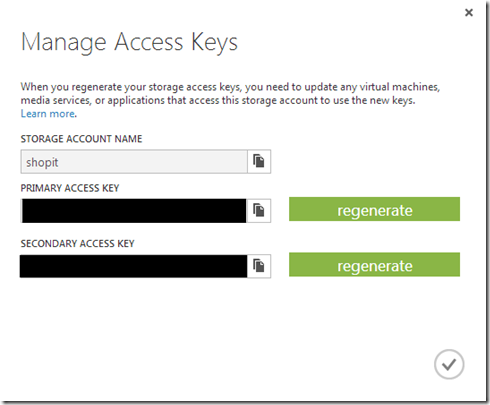

Storage Keys

As mentioned earlier, when creating the storage credentials object, you need to provide your storage account name and either the primary or secondary base64 key. This is all information we covered in the article on Blob Storage, but you can obtain it from your Azure Portal by selecting “Manage Access Keys” under “Storage”, which lists the storage accounts you have created.

Yes, as you might have guessed, the access keys are the keys to the kingdom, so you would not want to pass this information out. However, for the sake of simplicity, I am demonstrating the basics of what we need in order to access a specific table storage account. We will take a closer look at security later on in this article.

A better approach to acquiring a CloudStorageAccount would be to look at the “Windows Azure Configuration Manager” NuGet package that can help with abstracting out some of the overhead of creating an account.

Creating Tables

So as we saw earlier, in order to create a table we have to have a reference to a table. Azure has some table naming rules that we will need to follow. Once we have a reference to a table there are a few different ways to create a table.

    CloudTable table = tableClient.GetTableReference("footwear");
    table.Create();

Or, if you aren’t sure whether it has been created yet:

    CloudTable table = tableClient.GetTableReference("footwear");
    table.CreateIfNotExists();

You might find code that calls CreateIfNotExists every time it wants to interact with a table. Recall that when we talked about pricing in the Azure Blob Storage article, one aspect of billing is that it is based on transactions. The CreateIfNotExists example above conducts multiple transactions to validate that the table exists. Therefore, you could easily incur unneeded transactional overhead, especially if you are doing this on every attempt to work with a table. For the creation of tables, I would advise you to create the tables ahead of time when you can.
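When creating tables ahead of time isn't practical, one option is to make sure the existence check runs at most once per process. The following is a minimal sketch of that idea using `Lazy<T>`. `EnsureOnce` is a hypothetical helper, and the delegate passed to it is a stand-in for a call such as `table.CreateIfNotExists()`, injected so the sketch has no SDK dependency.

```csharp
using System;
using System.Threading;

// Runs an "ensure it exists" step at most once, however many callers ask for it.
public sealed class EnsureOnce
{
    private readonly Lazy<bool> _ensured;

    // `ensure` is a hypothetical stand-in for a call such as table.CreateIfNotExists().
    public EnsureOnce(Func<bool> ensure)
    {
        if (ensure == null) throw new ArgumentNullException(nameof(ensure));
        _ensured = new Lazy<bool>(ensure, LazyThreadSafetyMode.ExecutionAndPublication);
    }

    // The first call runs the delegate; later calls reuse the cached result.
    public bool Ensure() => _ensured.Value;
}
```

An application could hold one `EnsureOnce` per table, for example `new EnsureOnce(() => table.CreateIfNotExists())`, and call `Ensure()` before its first operation. Note that with this thread-safety mode, an exception thrown by the delegate is cached and rethrown on later calls.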

Insert

In this article, we are going to look at two of the main ways to persist data to your table. One is to explicitly define a class in your application that derives from the TableEntity class. A second, more implicit way to persist data to your table is through the use of the DynamicTableEntity.

We can start off by defining classes to represent our table entities, which will derive from TableEntity, creating a new instance of an entity we want to persist, followed by the table operation we want to carry out (insert) and finally executing it against the table reference we have:

    // Entity class: its public properties are persisted as the entity's properties.
    public class Footwear : TableEntity
    {
        public int Model { get; set; }
        public double Size { get; set; }
        public string Brand { get; set; }
        public string Name { get; set; }
        public string Gender { get; set; }
    }

    Footwear athleticShoe = new Footwear()
    {
        PartitionKey = "Athletics",
        RowKey = "038389_7_women",
        Brand = "AeroSpeed",
        Size = 7,
        Gender = "women",
        Model = 38389
    };

    TableOperation tableOperation = TableOperation.Insert(athleticShoe);
    TableResult result = table.Execute(tableOperation);

As you might have noticed, we’re using a combination of the model number, size and gender for the Row key. Depending on which common queries you determine will be used for data retrieval from your table, this key can have a significant effect on the unique keys available within a partition and on sort order.

TableResult encapsulates two important pieces of information. First, if you are performing a query, TableResult.Result will be the returned entity (or entities). Second, it encapsulates the HTTP response. In the previous article on Blob Storage we learned that the Azure Storage services are exposed through a REST API. This HTTP response can provide more insight into the results of a table operation. Going along in this article you’ll see that I capture the returned result just for completeness, but it isn’t always necessary.

De-normalization (A Short Commercial Break)

Since we are on the topic of inserting data in our tables, this is as good a time as any to talk about a common issue with NoSQL databases: de-normalization. We have already pointed out the significance of understanding how your table data will be queried and making the correct table design decisions ahead of time. If you decide that you will have more than one dominant query against the data, a common solution is to insert the data multiple times with different Row keys within a partition. This will allow multiple efficient queries to be performed against the same data.

A simple example using our Footwear table, where we might want to query by size or by model#, we can prefix the Row key and insert the same data twice. The prefix will allow our application to distinguish between the different values:

| Partition Key | Row Key |
| --- | --- |
| Athletics | size:07_38389_women |
| Athletics | model:038389_7_women |

The above example is just one possible solution, not a de facto method for creating Row keys; it is only a means for you to think about different scenarios for persisting entities. But as mentioned earlier, this is a case where we have de-normalized our table by duplicating the data. This isn’t the only case where de-normalization of your data might be required or be a possible solution to a problem.
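As an illustration of the prefixing idea, the following sketch builds the two Row keys shown in the table from the same model, size and gender values. `FootwearKeys` and its methods are hypothetical helpers, and the padding widths (six digits for the model, two for the size) are assumptions read off the example keys.

```csharp
using System.Globalization;

public static class FootwearKeys
{
    // Row key for lookups by model number, e.g. "model:038389_7_women".
    public static string ByModel(int model, int size, string gender)
    {
        return string.Format(CultureInfo.InvariantCulture,
            "model:{0:D6}_{1}_{2}", model, size, gender);
    }

    // Row key for lookups by size, e.g. "size:07_38389_women".
    public static string BySize(int model, int size, string gender)
    {
        return string.Format(CultureInfo.InvariantCulture,
            "size:{0:D2}_{1}_{2}", size, model, gender);
    }
}
```

Inserting the same entity once under each key gives the application one Row key range to search per query type, at the cost of keeping both copies in sync when the data changes.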

Another common problem is the need for our application to work with different entities together, where the data is different, but closely related. If we can’t define relationships between entities, we can use the un-schematized characteristic of NoSQL entities to save associated entities in the same table. A common example would be an application that works with a Contact entity which requires working with Address entities. Saving them all in the same table under the same partition would afford the ability to perform a batch transaction when saving a Contact and its Addresses (we’ll talk about batch transactions).

Updates

Updating existing properties on an existing entity in our table isn’t the only kind of update that can occur. Because entities are no more than a bag of key/value pairs and there is no master schema that an entity has to follow, there is no reason why an update to an entity whose PartitionKey and RowKey match might not contain a completely different set of properties and values. Because of this, there are a few ways we might want to handle the update of an entity. This is where Merge and Replace come into play.

Merge

If we make changes to existing properties on an existing table entity, Merge will update the entity whose PartitionKey and RowKey match. But in the case where we have an entity with different properties and want to update an existing table entity without losing its existing properties, we can merge the two entities. This will result in a table entity with the combined properties as well as any changes to shared properties.

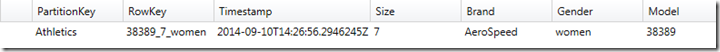

Before any changes we might have the following table entity:

    // Load the existing entity by its PartitionKey and RowKey.
    TableOperation query = TableOperation.Retrieve<Footwear>("Athletics", "038389_7_women");
    TableResult results = table.Execute(query);
    Footwear footwear = (Footwear)results.Result;

    // Reuse the keys and ETag, and supply only the new properties.
    DynamicTableEntity newFootwear = new DynamicTableEntity()
    {
        PartitionKey = footwear.PartitionKey,
        RowKey = footwear.RowKey,
        ETag = footwear.ETag,
        Properties = new Dictionary<string, EntityProperty>
        {
            { "PrimaryColor", new EntityProperty("Red") },
            { "SecondaryColor", new EntityProperty("White") }
        }
    };

    TableResult result = table.Execute(TableOperation.Merge(newFootwear));

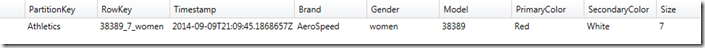

In this example, we are loading an existing entity by its PartitionKey and RowKey. I use a DynamicTableEntity to demonstrate merging new changes into an existing entity by performing a Merge TableOperation. We can see the final result is the combination of the existing properties as well as the new PrimaryColor and SecondaryColor properties.

Note that the DynamicTableEntity wasn’t required to make changes to an existing entity. But your application’s entity POCOs might change while you want to retain existing property information, or possibly your application has a split persistence model that needs to merge the data into an entity.

Replace

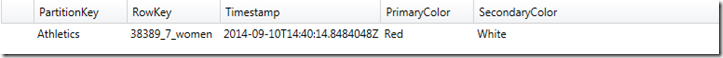

Perhaps we don’t want to retain previous information and want to alter an existing entity completely. Replace lets us completely replace the existing entity whose PartitionKey and RowKey match. Taking the previous example, we conduct a Replace TableOperation.

    TableOperation replaceOperation = TableOperation.Replace(newFootwear);
    TableResult result = table.Execute(replaceOperation);

And we can see how it has completely altered the structure and data for the existing table entity.

Because I have seen some confusion out there about the differences, just remember: Merge retains any current properties and data on an existing entity (data retention), while Replace does just what the name implies and replaces the entity completely with the new entity (data loss).
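The difference can be modeled with plain dictionaries standing in for an entity's property bag. This is only a sketch of the semantics described above, not of the storage client itself; `EntityUpdateSemantics` and its method names are hypothetical.

```csharp
using System.Collections.Generic;

public static class EntityUpdateSemantics
{
    // Merge: keep every stored property, then add or overwrite with the incoming ones.
    public static Dictionary<string, object> Merge(
        IDictionary<string, object> stored, IDictionary<string, object> incoming)
    {
        var result = new Dictionary<string, object>(stored);
        foreach (var pair in incoming)
        {
            result[pair.Key] = pair.Value;
        }
        return result;
    }

    // Replace: the stored properties are discarded; only the incoming ones survive.
    public static Dictionary<string, object> Replace(
        IDictionary<string, object> stored, IDictionary<string, object> incoming)
    {
        return new Dictionary<string, object>(incoming);
    }
}
```

Given a stored bag with Brand and Size and an incoming bag with only PrimaryColor, Merge yields all three properties, while Replace yields PrimaryColor alone.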

You can’t modify the existing PartitionKey and RowKey. In the case where this is needed, a simple insert of a new entity with the desired PartitionKey and RowKey and a deletion of the old entity will be required.

Delete

Using the same Footwear entity example from above, we can simply delete an entity doing the following:

    TableOperation deleteOperation = TableOperation.Delete(footwear);
    table.Execute(deleteOperation);

Be aware that, though it might appear you can simply pass in a newly constructed entity with the correct PartitionKey and RowKey, you are required to provide a value for the ETag property, which we haven’t talked about yet and which is covered under the Concurrency section. You can force the delete by using the wildcard “*”, or first load the entity from the table and pass it as the entity in the delete operation.

Hey Wait! There’s More

The Azure Table REST API provides a couple of combined operations for when we aren’t sure whether an entity already exists. Through the storage client library we can either InsertOrMerge:

    TableOperation insertOrMergeOperation = TableOperation.InsertOrMerge(newFootwear);
    TableResult result = table.Execute(insertOrMergeOperation);

Or we have the option to InsertOrReplace:

    TableOperation insertOrReplaceOperation = TableOperation.InsertOrReplace(newFootwear);
    TableResult result = table.Execute(insertOrReplaceOperation);

In all the above operations, we were only ever working with one table operation at a time. Azure Tables provide a way to perform transactions by means of Entity Group Transactions.

Batch Operations with Entity Group Transactions

A tip that I pointed out earlier, as well as in the last post on Blob Storage, is that there is an associated cost with each transaction. You might have observed that all previous operations have been single operations involving one table entity. In the case where our application has a relationship between entities that are stored in the same table under the same partition key, we might want to perform a batch operation in which either all of the operations succeed or none of them are applied.

Entity Group Transactions (EGTs) are Azure’s answer to such a scenario, in which we want to perform a series of operations at one time and ensure they all succeed or all fail. There are a number of restrictions involving EGTs. The primary restriction is that all entities involved in a group transaction must have the same Partition Key, which we mentioned earlier when we were discussing how to design our tables. But let’s quickly look at what those restrictions are when using EGTs:

- All entities involved must share the same Partition Key.

- No more than 100 entities can be involved in a single group transaction.

- An entity can only appear once within a group transaction and there can only be one operation against the entity.

- A group transaction payload can total no more than 4 MB.
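Three of these restrictions can be checked locally before a batch is ever sent. The following sketch does that for a planned batch described only by its (PartitionKey, RowKey) pairs; `EntityGroupRules` is a hypothetical helper, and the 4 MB payload limit is deliberately left out because it depends on the serialized entities.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public static class EntityGroupRules
{
    public const int MaxEntitiesPerGroup = 100;

    // Returns a description of each violated rule; an empty list means none was found.
    // The payload size limit is not checked here.
    public static List<string> Validate(
        IReadOnlyList<(string PartitionKey, string RowKey)> keys)
    {
        var problems = new List<string>();

        if (keys.Select(k => k.PartitionKey).Distinct(StringComparer.Ordinal).Count() > 1)
            problems.Add("All entities must share the same partition key.");

        if (keys.Count > MaxEntitiesPerGroup)
            problems.Add("No more than 100 entities can be in one group transaction.");

        // A repeated (PartitionKey, RowKey) pair means the same entity appears twice.
        if (new HashSet<(string, string)>(keys).Count != keys.Count)
            problems.Add("An entity can appear only once in a group transaction.");

        return problems;
    }
}
```

A check like this could run just before a batch is executed, so that a rule violation is caught in the application rather than coming back as a failed request.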

The TableBatchOperation actually is a collection of single atomic TableOperations which we went over earlier.

    TableBatchOperation batchOperations = new TableBatchOperation
    {
        TableOperation.Insert(new Footwear
        {
            PartitionKey = "Athletics",
            RowKey = "model:038389_7_women",
            Brand = "AeroSpeed",
            Size = 7,
            Gender = "women",
            Model = 38389
        }),
        TableOperation.Insert(new Footwear
        {
            PartitionKey = "Athletics",
            RowKey = "size:07_38389_women",
            Brand = "AeroSpeed",
            Size = 7,
            Gender = "women",
            Model = 38389
        })
    };

    table.ExecuteBatch(batchOperations);

This was a simple operation. But a more common example might be that we have a number of entities that we want to persist, and we need to ensure that all operations in a TableBatchOperation share the same PartitionKey and that the count does not exceed 100 per batch. Suppose we had more than 100 Footwear entities to process:

    IEnumerable<Footwear> footwears = GetFootwear(); // Get some unknown number of footwear objects
    TableBatchOperation batchOperations = new TableBatchOperation();

    foreach (var footwearGroup in footwears.GroupBy(f => f.PartitionKey))
    {
        foreach (var footwear in footwearGroup)
        {
            if (batchOperations.Count < 100)
            {
                batchOperations.Add(TableOperation.InsertOrReplace(footwear));
            }
            else
            {
                // The batch is full: send it and start a new one with the current entity.
                table.ExecuteBatch(batchOperations);
                batchOperations = new TableBatchOperation { TableOperation.InsertOrReplace(footwear) };
            }
        }

        // Send whatever is left for this partition before moving to the next one.
        table.ExecuteBatch(batchOperations);
        batchOperations = new TableBatchOperation();
    }

    if (batchOperations.Count > 0)
    {
        table.ExecuteBatch(batchOperations);
    }

Here, we group all the entities by partition key, then process all groups in batches of 100. We then ensure if there are any batched operations left over at the end that those are processed.
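The same grouping logic can also be written as a planning step that knows nothing about the storage client, which makes it easy to test on its own. This is a sketch under that assumption; `Batching`, `Chunk` and `PlanBatches` are hypothetical names, and the partition key is supplied through a delegate.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public static class Batching
{
    // Splits a sequence into consecutive lists of at most `size` items.
    public static List<List<T>> Chunk<T>(IEnumerable<T> source, int size)
    {
        if (source == null) throw new ArgumentNullException(nameof(source));
        if (size <= 0) throw new ArgumentOutOfRangeException(nameof(size));

        var chunks = new List<List<T>>();
        var current = new List<T>();
        foreach (var item in source)
        {
            current.Add(item);
            if (current.Count == size)
            {
                chunks.Add(current);
                current = new List<T>();
            }
        }
        if (current.Count > 0) chunks.Add(current);
        return chunks;
    }

    // Groups entities by partition key, then chunks each group, so that every
    // returned batch has a single partition key and at most `maxPerBatch` items.
    public static List<List<T>> PlanBatches<T>(
        IEnumerable<T> entities, Func<T, string> partitionKeyOf, int maxPerBatch = 100)
    {
        var batches = new List<List<T>>();
        foreach (var group in entities.GroupBy(partitionKeyOf))
        {
            batches.AddRange(Chunk(group, maxPerBatch));
        }
        return batches;
    }
}
```

Each planned batch could then be turned into one TableBatchOperation and passed to table.ExecuteBatch, keeping the partitioning rules in one small, SDK-free function.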

TableBatchOperations provide very efficient persistence of your entities, and it shows. Azure’s targeted batch processing speed is 1000 batch transactions per second, so use them when you can.

Conclusion

We have managed to cover everything from understanding Azure’s NoSQL Table Storage and its differences from relational databases to designing tables, performing persistence operations and, finally, taking advantage of batch operations. This was a lot to cover, but it’s far from over. The next installment, due out very soon, will cover the second half of the agenda listed at the beginning of this article. This includes writing queries against the data, retrying failed table operations, concurrency and security. So stay tuned…